Managing infrastructure is a core requirement for most modern applications. When a software company is established, a large amount of hardware must be managed correctly for its service to run efficiently and stably. Many components have to work in concert: installing cabinets, configuring servers, network and security devices, cabling correctly, integrating the application or service being written with systems that are already running, and planning the cooling, power, and backup systems.

Integrating software as a service with these systems and keeping it running properly in the appropriate environments is part of the DevOps team's job description. DevOps consists of processes, ideas, and techniques centered on integration. Traditionally, almost all operations designed and implemented at this stage are performed by entering commands manually. With a sound design this causes few problems as long as the structure stays the same size. However, as the software or the team behind the service grows, both the amount of hardware and the number of integrations increase. Even a system built on solid ground then stops being practical, because the manual workload becomes burdensome and unpredictable. Over time this leads to new problems, such as teams losing track of the structure or the system slowing down. As a result, errors multiply and the interruptions and downtime they cause become more frequent. Sooner or later most projects reach this point, and companies find themselves cornered.

Rapidly developing technology is radically changing this traditional structure. For example, companies are moving away from the hardware architectures they built with large budgets and toward options such as network virtualization, virtual machines, or cloud platforms. They either use virtualization technologies in a system room they have built themselves or purchase services from a cloud provider (AWS, Cloudflare, Oracle Cloud, GCP, DigitalOcean, etc.) without setting up a system room at all. In this way they avoid the cost of large equipment and reduce the workload of managing, securing, architecting, and configuring that equipment in detail.

At this point, instead of investing heavily in hardware, DevOps teams use automation tools such as Chef, Puppet, Docker, Jenkins, and Kubernetes to lighten their workload. As a result, both software development and operations teams spend most of their time on software and systems integration. The key point is to bring a degree of automation to every process. However, there are still areas where the tools mentioned above fall short and create extra work, particularly provisioning the infrastructure itself. This is where Terraform comes into play.

TERRAFORM

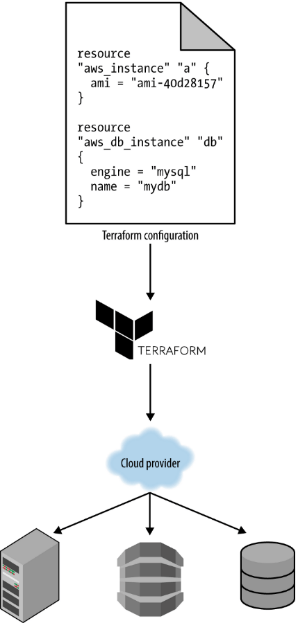

Terraform is an open source IaC (Infrastructure as Code) tool developed by HashiCorp in the Go language. DevOps teams use it to define infrastructure as code in a secure and efficient way. It converts IT infrastructure into code and manages it programmatically, yet it is independent of any particular cloud, which makes management easier for teams during the integration stages. It can be used in any supported environment without being tied to a vendor, product, or type of integration, and without having to learn new platform-specific tools.

The advantage of a vendor-neutral Infrastructure as Code tool is that the same service can be offered on different platforms. By contrast, AWS CloudFormation, Azure Resource Manager, and Google Cloud Deployment Manager are examples of IaC tools that are each tied to a single cloud platform. When designing system and network infrastructure as code for applications or services, it is important that DevOps teams follow IaC practices as well as handling integration.

Different tools are available to enable IaC. Chef, Puppet, Ansible, and SaltStack are configuration management tools that work in a multi-vendor structure, while Pulumi, like Terraform, focuses on provisioning infrastructure. With configuration management tools it is easy to manage network products from a common point using scripts.

However, configuration management tools are designed primarily to install, manage, and update software on existing infrastructure, that is, on servers that are already installed. Terraform operates as a purely declarative tool, while Ansible or Puppet combine declarative and procedural configuration. In a procedural configuration, the steps used to build the infrastructure are specified precisely and by hand. This brings more workload because it relies on specific procedures and manual inputs or checks, although it also provides more control over the systems. Because procedural approaches stay close to manual tracking and increase the workload, many businesses prefer Terraform. In addition, Terraform can build infrastructure from scratch and manage its full life cycle, which the other tools are not primarily designed to do.

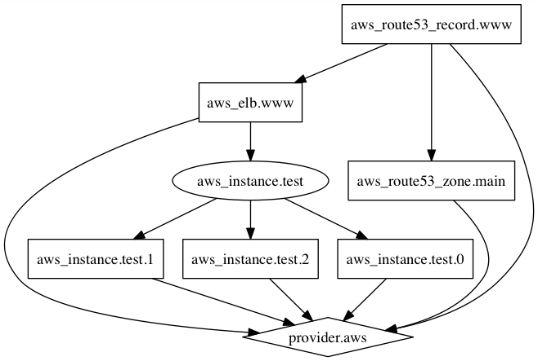

Terraform is an orchestration tool developed as a common product for services that use different cloud platforms. It lets a company automate its own infrastructure, or infrastructure spread across several cloud platforms, from a single point, so that services can run in every vendor's environment. It can manage not only cloud platforms but also on-premises environments (VMware, OpenStack) with a few commands. In other words, it is designed to create the server environment in which services or applications are installed, managed, or developed. The main reasons Terraform is preferred are that it works with more than one vendor and service and that the infrastructure it defines is treated as immutable. A product can therefore offer the same service by creating equivalent infrastructure on cloud platforms from different vendors (AWS, Google Cloud, Azure). Terraform can also produce a visual graph of the infrastructure, which makes the managed structure easy to follow. Additionally, it can manage low-level components such as compute, storage, and network resources, as well as high-level components such as DNS entries and SaaS and PaaS features.
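
As a rough illustration of managing more than one vendor from a single configuration, the sketch below declares a storage bucket on AWS and another on Google Cloud. The bucket names, project ID, and regions are hypothetical placeholders, and credentials are assumed to be configured outside the file. Resource types still differ from provider to provider; what stays the same is the language and the workflow.

```hcl
terraform {
  required_providers {
    aws = {
      source = "hashicorp/aws"
    }
    google = {
      source = "hashicorp/google"
    }
  }
}

provider "aws" {
  region = "eu-central-1" # assumed region
}

provider "google" {
  project = "example-project-id" # hypothetical project ID
  region  = "europe-west3"       # assumed region
}

# One bucket per vendor, both described in the same file.
resource "aws_s3_bucket" "assets" {
  bucket = "example-assets-bucket-aws" # hypothetical, must be globally unique
}

resource "google_storage_bucket" "assets" {
  name     = "example-assets-bucket-gcp" # hypothetical, must be globally unique
  location = "EU"
}
```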

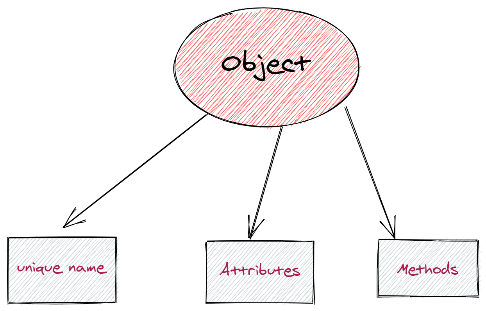

The concept of immutability mentioned here comes from software, where an immutable object is one whose value cannot be changed after it is created. Types such as string, integer, double, and byte are immutable in many languages. A mutable object, on the other hand, is one whose value can change after creation, depending on time, application, or state. Applied to infrastructure, this means a resource is replaced with a new one rather than modified in place.

Terraform code is simple, plain, and readable. Infrastructure configurations are defined in HCL (HashiCorp Configuration Language), a configuration language that resembles JSON. This follows from Terraform's declarative approach to IaC: in most of the code only the desired result is specified, and the underlying implementation steps are left to Terraform. Because Terraform's source code is open, developers and organizations around the world continually extend it by writing new plugins or building on existing ones.
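
To give a feel for what this looks like, here is a minimal sketch that needs no cloud account. It uses the `local` provider to declare that a file with certain content should exist; the resource name `greeting` and the file name are hypothetical. Only the desired result is written down, not the steps to reach it.

```hcl
terraform {
  required_providers {
    local = {
      source = "hashicorp/local"
    }
  }
}

# Desired state: a file with this content exists next to the configuration.
# Terraform decides whether it has to create, replace, or leave the file alone.
resource "local_file" "greeting" {
  filename = "${path.module}/hello.txt"
  content  = "Hello from Terraform\n"
}
```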

Terraform currently runs on macOS, FreeBSD, Linux, OpenBSD, Solaris, and Windows. In addition, ARM processor support is available on FreeBSD and Linux.

HashiCorp offers a managed solution called Terraform Cloud. It provides a platform for users to manage infrastructure across all supported providers without the hassle of installing or managing Terraform itself.

Four main features come to mind when it comes to Terraform:

- IaC: Simply put, Infrastructure as Code (IaC) allows users to describe their infrastructure in code. It is an operational practice in which defining, deploying, updating, removing, and versioning infrastructure are all handled by writing and managing code. The goal is to carry out the integration stages through automated processes as much as possible, so that instead of manual scripts or procedures, changes are applied in order from a single body of code. The IaC configuration also determines which API calls Terraform makes. Because it removes the burden of manual work, IaC speeds up processes and reduces the likelihood of errors or deviations when infrastructure is created, such as incorrect configurations or incorrect resource allocation. Once the code has become a standard, it can run on almost any supported platform, which makes allocating new infrastructure, testing software, scaling, and reconfiguring much easier. IaC also allows infrastructure changes to be managed through a source control system such as Git and integrated into a CI/CD pipeline. It therefore not only automates infrastructure changes but also makes them auditable and easy to roll back when needed.

Despite the many benefits of IaC, some organizations still hesitate to adopt Terraform-like IaC solutions because of concerns about speed, accuracy, data visibility, and security. These concerns are expected to diminish as the tooling continues to improve.

- Implementation Plans: The planning stage consists of writing or changing the infrastructure's configuration files in line with the decisions made when the infrastructure is created or migrated. If existing infrastructure is to be changed, the configuration files are changed accordingly, and the desired state is declared in them. Once the structure has been declared, running `terraform plan` makes Terraform create an implementation plan (called an execution plan in Terraform's own terminology). The plan states what changes Terraform must make to bring the existing infrastructure to the declared state. To do this, Terraform computes the difference between the declared state and the current state so that the desired structure is reached with as few changes as possible. For complex infrastructures the planning phase takes longer, mainly because API requests are made to every component in the infrastructure to retrieve its current status so that the changes can be calculated correctly. To mitigate this, the infrastructure can be divided into parts and plans run on subsets. If the plan's output is not what was intended, it is possible to go back and change the configuration. If the output is approved, Terraform is instructed to carry out the necessary actions.

If an error occurs while the plan is being applied, the run stops, but Terraform does not automatically return the infrastructure to the state it was in before the run. This is because the apply step only carries out what is in the plan: resources that were already created or changed stay as they are, and no resource is deleted unless the plan calls for it.

- Resource Graph: Terraform builds a resource graph that captures all dependency information in the infrastructure. Dependencies are usually expressed naturally through the configuration, so there is normally no need to draw up a separate dependency scheme or declare dependencies explicitly. Using the resource graph, changes to resources that do not depend on one another are applied in parallel, so the infrastructure is built as efficiently as possible. The graph can also be exported and visualized to learn more about the infrastructure.

- Change Automation: Using templates or schemas, new environments or infrastructure expansion requests can easily be created from infrastructure that has already been defined. Plans such as updating the infrastructure to the latest version can also be applied automatically, without human intervention. Where needed, a manual approval step, such as sign-off by a manager, can be required before changes are applied. With the implementation plan and the resource graph, it is easy to understand what will change and in what order.
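
The plan and resource graph features above can be tried with a small configuration that needs no cloud account. The sketch below is illustrative only: the resource names are hypothetical, and it assumes the `random` and `local` providers. One resource refers to another, which is enough for Terraform to infer the order; the third resource is independent and can be handled in parallel. The commands in the closing comment are the usual way to produce a plan, apply it, and print the graph.

```hcl
terraform {
  required_providers {
    random = { source = "hashicorp/random" }
    local  = { source = "hashicorp/local" }
  }
}

# Generates a random two-word name; depends on nothing else.
resource "random_pet" "server_name" {
  length = 2
}

# Implicit dependency: the reference to random_pet.server_name.id tells
# Terraform to create the name first. No explicit ordering is declared.
resource "local_file" "inventory" {
  filename = "${path.module}/inventory.txt"
  content  = "server: ${random_pet.server_name.id}\n"
}

# Independent of the two resources above, so it can be applied in parallel.
resource "local_file" "readme" {
  filename = "${path.module}/README.txt"
  content  = "Managed by Terraform\n"
}

# Typical workflow, run in the directory that contains this file:
#   terraform init               # download the providers
#   terraform plan -out=tfplan   # save the proposed changes to a plan file
#   terraform apply tfplan       # apply exactly the plan that was reviewed
#   terraform graph              # print the dependency graph in DOT format
```

Saving the plan to a file and applying that file is one way to make sure that what gets applied is what was reviewed and approved.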

Working Principle

Setting up infrastructure at scale, which involves planning the infrastructure environment, preparing server environments, manually installing operating systems, and drawing the network topology, is complex and error-prone. The simplest way to automate such a process is to write an ad hoc script that carries out the manual steps one by one. To define each step in code, a scripting language and a server to execute the script are needed, and running it involves a series of operations such as installing dependencies, checking or testing, and executing the code.

Terraform, by contrast, is written in Go and compiled into a single binary called terraform. This binary can be used to deploy infrastructure from almost any machine, whether a laptop, a build server, or another computer, and it does not require any additional infrastructure to run. All communication with platforms takes place through API calls.

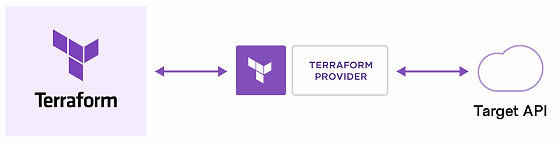

Terraform creates and manages resources on cloud platforms and other services through their APIs. The user supplies only the description of the resources needed, without specifying the exact steps to build them, which simplifies the user experience. Providers make it possible for Terraform to work with almost any platform or service that has an accessible API. When a command is run to manage a server, database, or network device, Terraform parses the configuration code and translates it into API calls to the resource provider. Because it talks to the API servers that the platform providers already run, Terraform also uses the authentication mechanisms already in place with those providers. Finally, Terraform works out how the infrastructure must change to reach the desired result.

First, the resources to be used in the infrastructure are defined. Next, Terraform creates an execution plan that describes what it will create, update, or destroy, based on the existing infrastructure and the configuration. Once approved, the proposed actions are applied in an order that respects resource dependencies. For example, if the properties of a VPC are updated and the number of virtual machines in that VPC is changed, Terraform will recreate the VPC before scaling the virtual machines.
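
The VPC example could look roughly like the following on AWS. This is a sketch under stated assumptions: the region, CIDR ranges, instance type, and the two variables are hypothetical choices made for illustration, and AWS credentials are assumed to be available. Because the instances refer to the subnet and the subnet refers to the VPC, Terraform handles the VPC first when both it and `instance_count` change.

```hcl
terraform {
  required_providers {
    aws = {
      source = "hashicorp/aws"
    }
  }
}

provider "aws" {
  region = "eu-central-1" # assumed region
}

variable "ami_id" {
  type        = string
  description = "AMI to launch (hypothetical, supplied by the user)"
}

variable "instance_count" {
  type        = number
  default     = 2
  description = "Number of virtual machines to run in the VPC"
}

resource "aws_vpc" "main" {
  cidr_block = "10.0.0.0/16"
}

resource "aws_subnet" "app" {
  vpc_id     = aws_vpc.main.id # depends on the VPC
  cidr_block = "10.0.1.0/24"
}

resource "aws_instance" "app" {
  count         = var.instance_count
  ami           = var.ami_id
  instance_type = "t3.micro"
  subnet_id     = aws_subnet.app.id # depends on the subnet, and through it on the VPC
}
```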

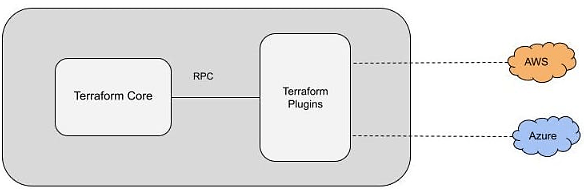

Terraform has two important components: Terraform Core and Terraform Plugins.

- Terraform Core is responsible for reading configuration files, managing state, building the resource graph, and executing the plan. Core is a binary written and compiled in the Go programming language. The compiled binary is the command-line interface (CLI) and communicates with plugins via RPC.

- Terraform Plugins define the resources for specific services. Their responsibilities include authenticating with infrastructure providers and initializing the libraries used to make API calls.
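
In a configuration, the split between Core and plugins shows up as a provider requirement plus a provider block. The sketch below assumes the AWS provider; the version constraint, region, and profile name are hypothetical. Running `terraform init` downloads the plugin named in `required_providers`, and the provider block tells that plugin how to authenticate.

```hcl
terraform {
  required_providers {
    aws = {
      source  = "hashicorp/aws" # plugin address on the Terraform Registry
      version = "~> 5.0"        # assumed version constraint
    }
  }
}

# Settings passed to the plugin; it uses them to authenticate and to
# choose which regional API endpoint to call.
provider "aws" {
  region  = "eu-central-1" # assumed region
  profile = "example"      # hypothetical named profile from the local AWS config
}
```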

Terraform modules make it easier to manage multiple infrastructure resources together, allowing complex resources to be assembled from reusable and configurable building blocks. Each module is a container for multiple infrastructure resources that the developer wants to group together. A module can call other modules, known as child modules, which makes configurations shorter and faster to write. A module can be called multiple times, within the same configuration or from separate configurations. Modules have both input and output variables: input variables accept values from the calling module, and output variables return data to the calling module.
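
A minimal sketch of a module with one input and one output is shown below. The module name, file paths, and variable names are hypothetical, and the two files are shown in one block only for brevity; in practice each section lives in the file named in its comment. The root module calls the same child module twice with different inputs and collects both outputs.

```hcl
# --- modules/greeting/main.tf (hypothetical child module) ---

variable "name" {
  type        = string
  description = "Value passed in by the calling module"
}

resource "local_file" "greeting" {
  filename = "${path.module}/${var.name}.txt"
  content  = "Hello, ${var.name}\n"
}

output "path" {
  description = "Value returned to the calling module"
  value       = local_file.greeting.filename
}

# --- main.tf (root module) ---

module "greeting_dev" {
  source = "./modules/greeting"
  name   = "dev"
}

module "greeting_prod" {
  source = "./modules/greeting"
  name   = "prod"
}

output "greeting_paths" {
  value = [module.greeting_dev.path, module.greeting_prod.path]
}
```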

Each module published for sharing should also follow a naming convention and have a repository description, the standard module structure, a supported version control system, and version tags. The Terraform Registry acts as a central repository for sharing modules and enables them to be discovered and distributed to users. The Registry is available in two variants. The Public Registry hosts community-provided modules and exposes them through an API. The Private Registry hosts modules used internally within an organization.
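
Calling a module from the Public Registry looks roughly like this. The sketch uses the community `terraform-aws-modules/vpc/aws` module; the version constraint and input values are assumptions chosen for illustration, and an AWS provider configuration is assumed to exist elsewhere. The commented-out block shows the general shape of a private registry source, with a hypothetical organization and module name.

```hcl
module "vpc" {
  source  = "terraform-aws-modules/vpc/aws" # <NAMESPACE>/<NAME>/<PROVIDER>
  version = "~> 5.0"                        # assumed version constraint

  name = "example-vpc" # hypothetical name
  cidr = "10.0.0.0/16"
}

# Private registry sources add a hostname in front, for example:
#
# module "internal_network" {
#   source  = "app.terraform.io/example-corp/network/aws" # hypothetical
#   version = "1.0.0"
# }
```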

For Terraform installation and implementation steps, please follow the links in the sources below.

Sources

https://www.terraform.io/intro/use-cases

https://medium.com/devopsturkiye/terraform-nedir-infrastructure-as-code-nedir-2-bff310cd5782

https://kerteriz.net/terraform-nedir/

https://www.mshowto.org/terraform-part-1-genel-ozellikler-ve-basic-deployment.html#close

https://www.techtarget.com/searchitoperations/definition/Terraform

https://kerteriz.net/infrastructure-as-code-iac-nedir/

https://spacelift.io/blog/what-is-terraform
